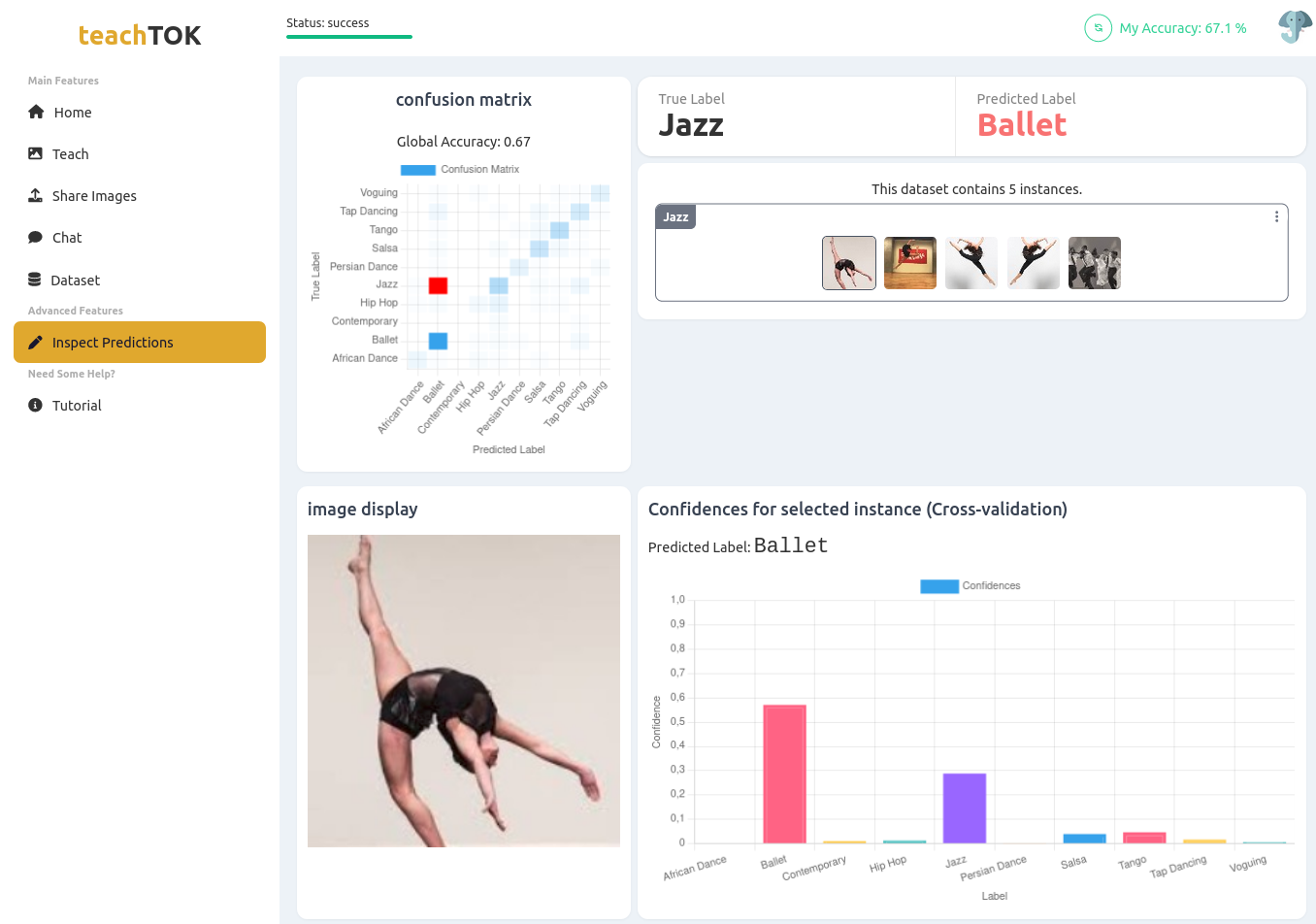

TeachTOK, a Collaborative Interactive Machine Teaching Application

I developed TeachTOK for the first project of my thesis on Collaborative Interactive Machine Teaching (CIMT). Built with the Marcelle Toolkit, TeachTOK supports a collaborative workflow in which users take on the role of teachers: they curate teaching examples, train an image classifier, and communicate and coordinate throughout the process. In our study, we used TeachTOK to investigate how users negotiate teaching strategies, balance different objectives, and collectively shape model behavior. The code is available on GitHub, and the application can be tried online. A paper (in French) gives more details on the design and implementation of TeachTOK.

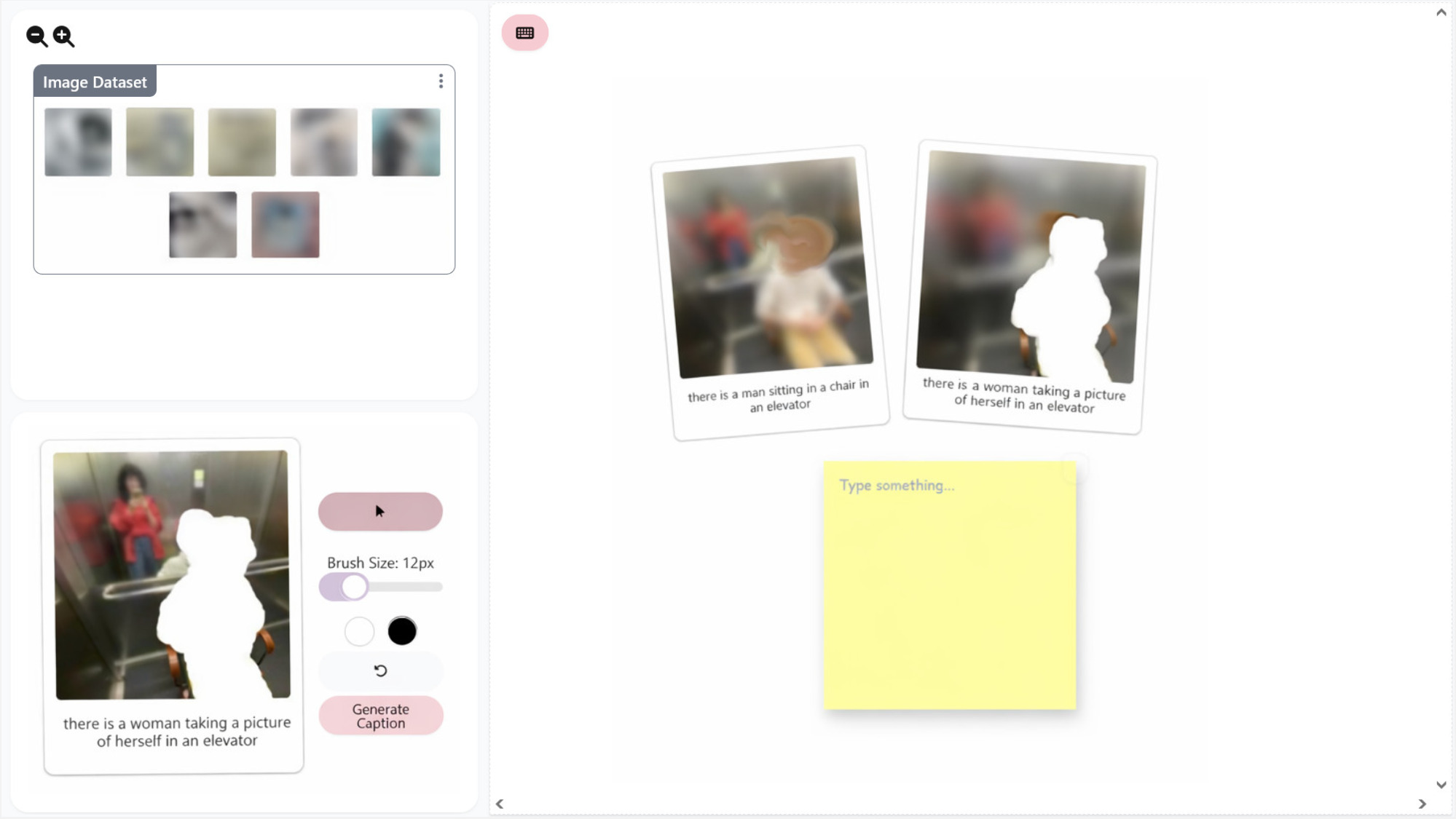

Image Captioning Auditing Interface

For my PhD project on Sensemaking in User-Driven Algorithm Auditing, I developed an interactive auditing interface that supports novice users in making sense of an image captioning model’s outputs, so that they can identify problematic behaviors such as patterns of gender bias. The interface enables users to explore an image dataset, test the model, and document their observations. It also includes two auditing tools that scaffold sensemaking. The first lets users mask parts of an image and observe how the captions change. The second lets them filter the image dataset by keywords they want to test in the captions. We studied how each of these tools shaped what novice users noticed and how they interpreted model behavior. The interface is available to try online, with source code on GitHub.
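To make the two auditing tools more concrete, here is a minimal Python sketch of the ideas behind them. It is not the interface's actual code: the names `mask_region` and `filter_by_keywords`, the nested-list image representation, and the caption dictionary are all assumptions made for illustration.

```python
from typing import Dict, List, Tuple

# Hypothetical stand-in for a real image type: grayscale pixels, row-major.
Image = List[List[int]]


def mask_region(image: Image, box: Tuple[int, int, int, int], fill: int = 0) -> Image:
    """Return a copy of `image` with the box (top, left, bottom, right) filled in.

    `bottom` and `right` are exclusive, like Python slices.
    """
    top, left, bottom, right = box
    masked = [row[:] for row in image]  # copy so the original stays untouched
    for y in range(max(top, 0), min(bottom, len(masked))):
        row = masked[y]
        for x in range(max(left, 0), min(right, len(row))):
            row[x] = fill
    return masked


def filter_by_keywords(captions: Dict[str, str], keywords: List[str]) -> List[str]:
    """Return the ids of images whose caption contains any keyword as a whole word."""
    wanted = {k.lower() for k in keywords}
    matches = []
    for image_id, caption in captions.items():
        # Split into words and strip punctuation so "horse." matches "horse".
        words = {w.strip(".,!?;:\"'").lower() for w in caption.split()}
        if words & wanted:
            matches.append(image_id)
    return matches
```

In an audit, the masked copy would be sent back to the captioning model and its new caption compared with the original one. The filter matches whole words on purpose, so that a search for "man" does not also return captions containing "woman".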

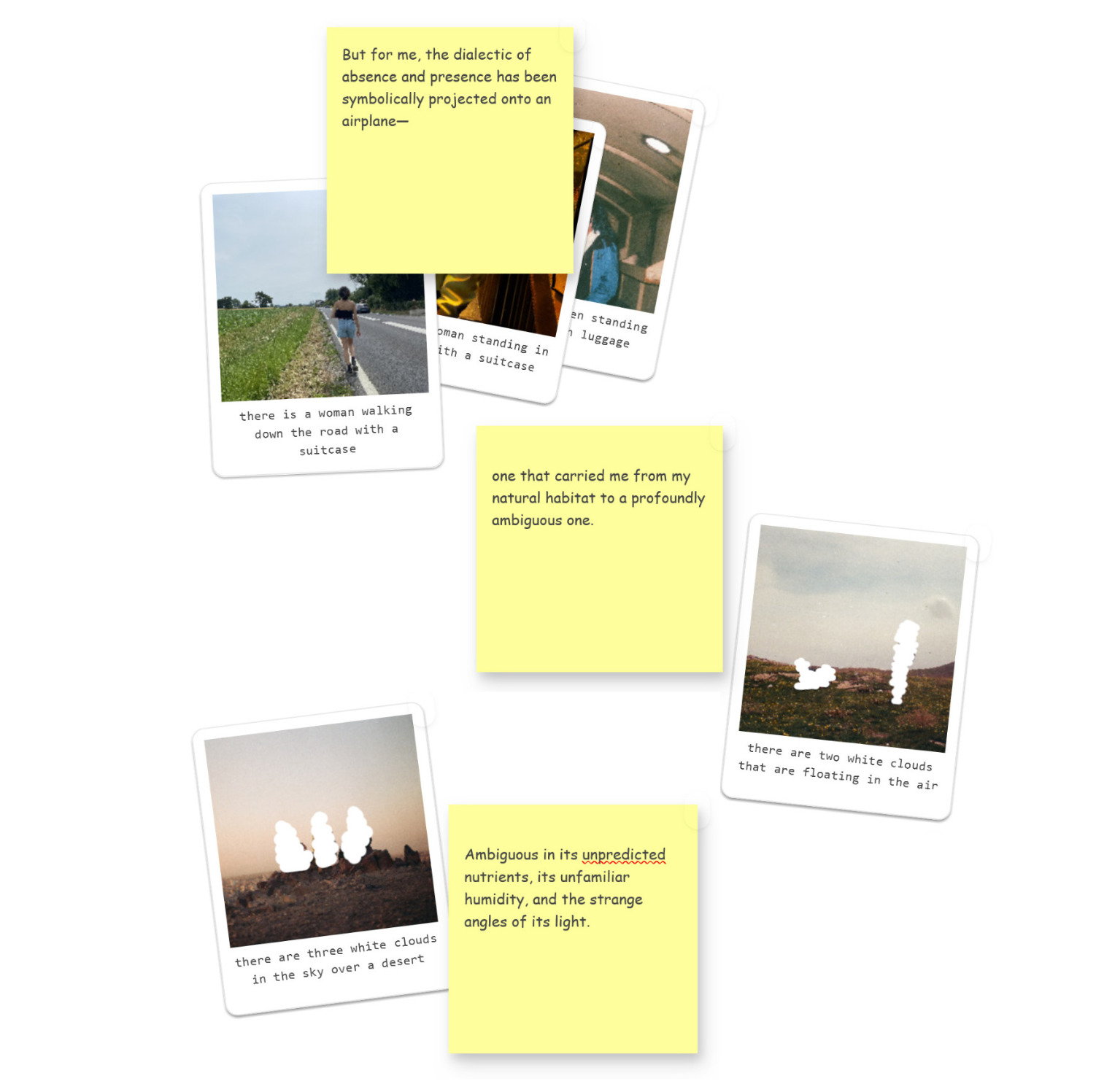

Designing for Friction

This tool explores how moments of mismatch in human–AI interaction can support users in making sense of AI behavior. Instead of treating friction as a problem, it makes friction something users can engage with and reflect on. It builds on a masking interaction that lets users “talk back” to an image captioning model by selectively removing parts of an image and observing how the model’s descriptions shift across variations. The tool combines this masking interaction with an affinity canvas where users can collect images, captions, and reflections, and progressively organize them into evolving narratives. I used this tool with my intimate photo archive in an autoethnographic audit to reflect on bias, identity, and diasporic experience. You can read more about it here. This work will be presented at the CHI 2026 Tools for Thought workshop. I plan to release the tool online soon.